All Models Are Wrong But Some Models Are Useful

Modelling (and I'm not talking about the catwalk variety!) is a long-standing method used to understand processes and phenomena. Astronomers created intricate mechanical models of the solar system, called orreries, to better understand how the planets moved relative to each other. For centuries, boat builders used carefully made scale models to get the right "lines" for a hull and to test how the hull would behave in the water. Architects still construct scale models of major buildings to show what they will look like.

These models are all simplifications of the actual phenomena. Orreries usually assume that the planets travel in circular orbits instead of ellipses, and boat models rarely have the fine detail of the planks. But these models can still be valuable. The well-known industrial statistician George Box put it well when he said "all models are wrong but some models are useful".

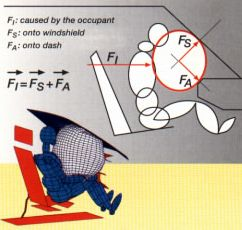

Today, most models are computer based and ideally use sound mathematical theory. The crash testing of motor vehicles is one example that has affected all our lives. In the past, actual pre-production vehicles were tested by crashing them into barriers. This was time-consuming and very expensive, meaning that only a few tests would be done. Today, mathematical models on high-speed computers are used to simulate how a car might behave in a crash. This requires detailed equations for how each body panel may deflect or tear, how each weld might withstand the stress and how each mass might move. The result is exquisite detail on how the car might absorb a crash and how great the forces on the occupants might be. This detail has led to much safer cars, while at the same time reducing their weight by putting strength exactly where it is needed, which has the additional environmental benefit of reducing fuel consumption.

Sometimes modelling is the only acceptable way to go. Testing a mechanical heart valve in situ may put the patient's life at risk, while investigating a model is not life threatening.

The mix of mathematics and computing varies. For engineering problems, it is relatively easy to model a simple shape mathematically, and this can lead to exact solutions without using computers. However, for many real problems the shapes are just too complex to solve with mathematics alone. The shape can be broken into many small parts, each of which can be described simply, but a computer is needed to tie all the parts together into a solution for the whole.
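A minimal sketch of this idea, assuming a deliberately simple one-dimensional problem so that the exact answer is known: a bar of unit length with both ends fixed under a uniform load, described by -u''(x) = 1 with u(0) = u(1) = 0, whose exact solution is u(x) = x(1 - x)/2. The bar is cut into small segments, each giving one simple equation that links a point to its two neighbours, and the computer solves all of them together as one tridiagonal system. The problem and the function names are illustrative only, not taken from any engineering package.

```python
def solve_tridiagonal(lower, diag, upper, rhs):
    """Thomas algorithm: solve a tridiagonal linear system without pivoting.
    lower[0] and upper[-1] lie outside the matrix and are not used."""
    n = len(diag)
    c = [0.0] * n  # modified upper coefficients
    d = [0.0] * n  # modified right-hand side
    c[0] = upper[0] / diag[0]
    d[0] = rhs[0] / diag[0]
    for i in range(1, n):
        denom = diag[i] - lower[i] * c[i - 1]
        c[i] = upper[i] / denom
        d[i] = (rhs[i] - lower[i] * d[i - 1]) / denom
    # Back substitution
    x = [0.0] * n
    x[-1] = d[-1]
    for i in range(n - 2, -1, -1):
        x[i] = d[i] - c[i] * x[i + 1]
    return x


def bar_deflection(n_segments):
    """Split [0, 1] into n_segments pieces; each interior point i contributes
    the simple local equation (-u[i-1] + 2*u[i] - u[i+1]) / h**2 = 1."""
    h = 1.0 / n_segments
    m = n_segments - 1                      # number of interior points
    lower = [0.0] + [-1.0] * (m - 1)
    diag = [2.0] * m
    upper = [-1.0] * (m - 1) + [0.0]
    rhs = [h * h] * m
    interior = solve_tridiagonal(lower, diag, upper, rhs)
    xs = [i * h for i in range(n_segments + 1)]
    return xs, [0.0] + interior + [0.0]     # fixed ends: u(0) = u(1) = 0


xs, u = bar_deflection(10)
max_error = max(abs(ui - x * (1 - x) / 2) for x, ui in zip(xs, u))
print(f"largest difference from the exact solution: {max_error:.1e}")
```

For this simple shape the assembled answer agrees with the exact one to within rounding error. The same assemble-and-solve approach still works when the shape is too irregular for an exact solution, which is exactly where the computer becomes essential.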

The examples given above all have an engineering emphasis, but modelling need not be restricted to engineering. One of the most fruitful areas of modelling targets processes, whether they are in business, manufacturing, transport or elsewhere. Examples of processes include:

- The operation of a call centre where incoming calls are routed to agents and handled through standard procedures;

- The management of a Court system where cases are lodged and go through a number of stages, possibly including a trial, before being resolved;

- A mineral processing plant where a continuous stream of ore goes through stages such as crushers, stockpiles, cyclones and screens to extract the minerals; and

- A port where ships must load or unload cargoes and link to land transport facilities.

These processes have a random component that creates additional complexity. For example, a call centre cannot control or predict when calls come in, and in a mine the actual grade of ore can vary. This means that the model cannot simply predict a single scenario. At the same time, while the overall system might be complex, its individual parts might be relatively simple. It is putting these simple components together to form the whole that can be difficult, especially when a system has random inputs.
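A minimal sketch of why a model with random inputs produces a range of outcomes rather than a single answer. It assumes, purely for illustration, that calls reach a call centre at random (a Poisson process) at an average of 30 per hour over an 8-hour day; these figures and the function name are hypothetical. Running the same day 1,000 times with different random numbers gives a spread of daily call counts around the long-run average of 240.

```python
import random
import statistics

CALLS_PER_HOUR = 30.0  # hypothetical average arrival rate
DAY_HOURS = 8.0        # hypothetical length of the working day


def calls_in_one_day(rng):
    """Count arrivals in one day by adding up random (exponential) gaps."""
    t, count = 0.0, 0
    while True:
        t += rng.expovariate(CALLS_PER_HOUR)  # hours until the next call
        if t > DAY_HOURS:
            return count
        count += 1


rng = random.Random(2004)
days = [calls_in_one_day(rng) for _ in range(1000)]
print("average calls per day:", statistics.mean(days))
print("quietest and busiest days:", min(days), max(days))
```

The model's output is therefore a distribution, and questions such as "how often will we exceed 270 calls?" can be answered from it.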

There are two ways to model such processes:

- Take a simple view of the process and fit mathematical theory to it (i.e. use mathematical equations to describe the constraints, operating rules and structure of the process). Generally, when there are random inputs, the model has to be made particularly simple to keep the mathematics "under control". In these cases assumptions are often made that we know are not strictly correct but may be acceptable if they dramatically simplify the mathematics. (Assuming normal distributions for inputs is a classic example of such an assumption.)

- Keep the complexities of the process and build a simulation model. While still based on mathematical theory, the simulation model can handle sophisticated systems without making as many assumptions or approximations.
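As an example of the first approach, queueing theory gives exact formulas for a single-server queue (the M/M/1 model), provided we accept simplifying assumptions of the kind described above. In queueing problems the usual assumptions are that arrivals occur at random (Poisson), that service times are exponentially distributed and that the system has settled into a steady state. A minimal sketch of the standard formulas; the function name and the ship figures are illustrative assumptions.

```python
def mm1_metrics(arrival_rate, service_rate):
    """Steady-state results for an M/M/1 queue: Poisson arrivals, exponential
    service times, one server, first-come first-served."""
    if arrival_rate >= service_rate:
        raise ValueError("unstable queue: arrival rate must be below service rate")
    utilisation = arrival_rate / service_rate
    mean_wait = utilisation / (service_rate - arrival_rate)  # time in queue before service
    mean_queue_length = arrival_rate * mean_wait             # Little's law
    return {"utilisation": utilisation,
            "mean_wait": mean_wait,
            "mean_queue_length": mean_queue_length}


# Hypothetical berth: 0.9 ships arrive per day on average; it can serve 1 per day.
print(mm1_metrics(0.9, 1.0))
```

With the berth busy 90% of the time, the formula gives a mean wait of 9 days, showing how sharply waiting grows as utilisation approaches 100%. The answer is only as good as the exponential assumptions behind it.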

Which method is best? Box's view is relevant here: the best model is the most useful one. Mathematical models sometimes provide a better understanding, but simulation models can be more accurate.
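A sketch of the simulation route for a single-server queue, using Lindley's recursion: the next customer's wait equals this customer's wait plus this customer's service time, minus the gap until the next arrival, floored at zero. With exponential inputs at arrival rate 0.5 and service rate 1.0, the standard M/M/1 formula gives a mean wait of 1.0, so the simulated figure can be checked against theory. Replacing the service times with uniform ones needs only a different function, whereas the mathematics needs a different formula (the Pollaczek-Khinchine result gives 13/24, about 0.54, for this case). The function and its parameters are hypothetical.

```python
import random


def mean_queue_wait(n_customers, interarrival, service, seed=1):
    """Single-server, first-come first-served queue via Lindley's recursion:
    next_wait = max(0, wait + service_time - time_to_next_arrival).
    `interarrival` and `service` each take a random.Random and return one time."""
    rng = random.Random(seed)
    wait, total = 0.0, 0.0  # the first customer finds the system empty
    for _ in range(n_customers):
        total += wait
        wait = max(0.0, wait + service(rng) - interarrival(rng))
    return total / n_customers


def arrivals(rng):
    return rng.expovariate(0.5)  # mean gap of 2 time units


exp_wait = mean_queue_wait(200_000, arrivals, lambda rng: rng.expovariate(1.0))
uni_wait = mean_queue_wait(200_000, arrivals, lambda rng: rng.uniform(0.5, 1.5))
print(f"exponential service: {exp_wait:.2f} (theory 1.00)")
print(f"uniform service:     {uni_wait:.2f} (theory 0.54)")
```

The simulated figures are estimates and will differ slightly from theory; the point is that the model structure did not change when the assumption did.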

Back when computing involved punch cards and camping by the computer overnight to get your program to run, simulation modelling was used as a last resort. However, today's computing power allows the processing of quite complex and large simulation models within a short period of time. Simulation modelling is often now the first (or in some cases, the only) approach considered when there is a need to understand a process. Computation has almost become a substitute for hard mathematical thinking.

Simulation modelling is strongly linked to computing, but it doesn't have to be. War games, for example, have been played out on boards, with pieces representing military units being physically moved around and sometimes a die being thrown to determine whether a unit is hit. In this way, simulation can assist in developing military strategies without armies actually having to be involved in combat. However, computers make it easy to build simulation models of very large and complex systems, whose sheer size is often enough to make them mathematically intractable. At the same time, mathematical and statistical expertise should still guide the judgements on how to build the models.

Once it has been established that a simulation model is the right approach, one of the first steps is to gain an understanding of the workings of the system or process. This can involve documenting the operating rules, for example, what causes events to happen in the system, the relationships between different events and how they behave when they do occur. In mathematical terms, this establishes the equations that define the system.
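Operating rules of this kind translate directly into a discrete-event simulation: the model keeps a list of future events in time order, and each type of event triggers its rule. A minimal sketch for a call centre, assuming (for illustration only) a single first-come first-served queue, identical agents, and exponentially distributed gaps between calls and call durations; the function and parameter names are hypothetical.

```python
import heapq
import random
from collections import deque


def call_centre(n_agents, arrival_rate, service_rate, horizon, seed=0):
    """Minimal discrete-event sketch of a call centre; returns each call's wait.
    Assumed rules: one first-come first-served queue, any free agent takes
    the next waiting call, and no new calls arrive after `horizon`."""
    rng = random.Random(seed)
    events = [(rng.expovariate(arrival_rate), "arrival")]  # (time, kind), kept as a heap
    queue = deque()           # arrival times of calls waiting for an agent
    free_agents = n_agents
    waits = []
    while events:
        now, kind = heapq.heappop(events)
        if kind == "arrival":
            if now > horizon:
                continue      # no calls after closing; calls already queued still drain
            # Rule: each arrival schedules the next one
            heapq.heappush(events, (now + rng.expovariate(arrival_rate), "arrival"))
            queue.append(now)
        else:
            free_agents += 1  # Rule: a departure releases its agent
        # Rule: while an agent is free and a call is waiting, start serving it
        while free_agents > 0 and queue:
            arrived = queue.popleft()
            waits.append(now - arrived)
            free_agents -= 1
            heapq.heappush(events, (now + rng.expovariate(service_rate), "departure"))
    return waits


# Hypothetical rates, per minute: 1.5 calls arrive, each agent completes 1 call.
waits = call_centre(n_agents=2, arrival_rate=1.5, service_rate=1.0,
                    horizon=50_000, seed=1)
print(f"{len(waits)} calls answered, mean wait {sum(waits) / len(waits):.2f} minutes")
```

For these rates, queueing theory (the Erlang C formula) gives a mean wait of about 1.29 minutes, a useful check that the rules have been coded correctly. Changing a rule, such as giving some callers priority, means editing the event handling rather than re-deriving equations.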

The next stage is often overlooked and involves determining whether the inputs to the model should be synthetic or real. For some processes the parameters of the inputs can be estimated using statistical theory. This may use real data to estimate the parameters but, once this is done, unlimited inputs can be simulated. For example, ship arrivals at a loading/unloading facility have statistical properties, and the time between arrivals follows a pattern or statistical distribution. In the model, ship arrivals can therefore be described by an average arrival rate (or, equivalently, a mean inter-arrival time) together with a variation structure. The service times of calls in a call centre could be described using similar statistical concepts. These inputs need to be established correctly for the simulation model to replicate the real situation as closely as possible, and they can be calibrated against real measures (expected output). A further benefit of estimating the inputs with statistical theory is that the simulation model can consider situations that are feasible but have not yet been experienced by the real system.
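A minimal sketch of estimating an input distribution from data and then generating as many synthetic inputs as needed. The gaps below are hypothetical, and the exponential distribution is an assumption made for illustration (its maximum-likelihood rate is simply one over the mean gap); in practice the fit should be checked against the data before it is relied upon.

```python
import random
import statistics

# Hypothetical observed gaps (hours) between successive ship arrivals.
observed_gaps = [14.2, 3.5, 22.8, 9.1, 6.4, 30.3, 11.7, 2.9, 18.5, 7.6]

# Assumption: gaps are exponentially distributed. The maximum-likelihood
# estimate of the arrival rate is then 1 / (mean gap).
arrival_rate = 1.0 / statistics.mean(observed_gaps)  # ships per hour


def synthetic_arrival_times(n_ships, rate, seed):
    """Generate one synthetic stream of arrival times from the fitted model."""
    rng = random.Random(seed)
    t, times = 0.0, []
    for _ in range(n_ships):
        t += rng.expovariate(rate)
        times.append(t)
    return times


# Once the rate is estimated, any number of input streams can be generated.
streams = [synthetic_arrival_times(1_000, arrival_rate, seed) for seed in range(5)]
print(f"estimated mean gap: {1 / arrival_rate:.1f} hours")
```

Ten observations are far too few for a real study; the point is that the fitted model, not the length of the record, limits how many input streams can be produced.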

The need to fully understand the input structure is removed when actual inputs can be used, but relying on real data may limit how many simulations can be run. Weather is a classic example of a model input that may be best handled in this way: it has a complex structure, but many locations have decades of weather data available. However, if the task is to model rare weather-related events such as major (once in a hundred years) floods, this approach will fail, because a record of a few decades may contain few or none of the events of interest.
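A sketch of the limitation with rare events, using a hypothetical 20-year record of annual maximum daily rainfall. Replaying (resampling) the real record can never produce a year worse than the worst one observed, whereas a fitted distribution can. The Gumbel distribution, fitted here by the method of moments, is an assumed choice because it is commonly used for annual maxima; the data and names are illustrative.

```python
import math
import random
import statistics

# Hypothetical record: largest one-day rainfall (mm) in each of 20 years.
annual_max = [62, 48, 71, 55, 90, 43, 66, 58, 77, 51,
              69, 84, 47, 60, 73, 56, 95, 64, 52, 68]
rng = random.Random(7)

# Option 1: replay the real record by resampling observed years.
replayed = [rng.choice(annual_max) for _ in range(10_000)]

# Option 2 (assumed model): Gumbel distribution fitted by the method of moments,
# using mean = mu + gamma * beta and variance = (pi * beta) ** 2 / 6.
EULER_GAMMA = 0.5772156649
beta = statistics.stdev(annual_max) * math.sqrt(6) / math.pi
mu = statistics.mean(annual_max) - EULER_GAMMA * beta


def gumbel_draw(rng):
    """Inverse-CDF sampling: x = mu - beta * ln(-ln(u)) for u in (0, 1)."""
    u = rng.random()
    while u == 0.0:  # random() can return 0.0, and ln(0) is undefined
        u = rng.random()
    return mu - beta * math.log(-math.log(u))


synthetic = [gumbel_draw(rng) for _ in range(10_000)]
print("worst replayed year: ", max(replayed), "mm")  # never exceeds the record maximum
print("worst synthetic year:", round(max(synthetic)), "mm")
```

Whether the fitted tail is believable is itself a statistical question, but only the fitted model can represent a flood larger than any in the record.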

The last stage is to determine what the outputs or measures of the system will be. Often these are well defined by the original questions that started the study. With complex simulations there are sometimes dozens of measures that can be extracted, and these may themselves require further statistical analysis.
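As a sketch of extracting several measures from one run, the hypothetical function below reduces a list of simulated call waiting times (in seconds) to a handful of summary measures. The 20-second service-level target is an assumed figure, and the waiting times in the example are made up for illustration.

```python
import statistics


def summarise_waits(waits, target=20.0):
    """Reduce one run's waiting times (seconds) to several output measures."""
    deciles = statistics.quantiles(waits, n=10)  # 9 cut points; the last is the 90th percentile
    return {
        "calls": len(waits),
        "mean_wait": statistics.mean(waits),
        "p90_wait": deciles[-1],
        "max_wait": max(waits),
        # Proportion of calls answered within the target time
        "service_level": sum(w <= target for w in waits) / len(waits),
    }


example_waits = [0, 0, 5, 12, 18, 25, 40, 3, 0, 60]  # hypothetical output of one run
print(summarise_waits(example_waits))
```

Because each run yields a set of such measures, comparing runs usually means analysing these summaries statistically rather than looking at any single value.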

Much of the power of a simulation model lies in understanding a system by considering alternative scenarios and seeing how the outputs change when the structure or the inputs are altered. For this, the measures need to be repeatable so that the different scenarios can be compared fairly.
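One common way to make scenario comparisons repeatable is to run each scenario several times with fixed random-number seeds, giving every scenario the same seeds and a separate random stream for each input (a technique known as common random numbers). A minimal sketch with a single-server queue; the rates, the faster-service scenario and the function name are hypothetical.

```python
import random
import statistics


def mean_wait(service_rate, seed, arrival_rate=0.8, n_customers=5_000):
    """One replication of a single-server queue (Lindley's recursion).
    Arrivals and service times use separate seeded streams, so every
    scenario run with the same seed sees exactly the same arrivals."""
    arrivals = random.Random(seed)
    services = random.Random(seed + 10_000)
    wait, total = 0.0, 0.0
    for _ in range(n_customers):
        total += wait
        wait = max(0.0, wait + services.expovariate(service_rate)
                   - arrivals.expovariate(arrival_rate))
    return total / n_customers


seeds = range(20)
base = [mean_wait(1.0, s) for s in seeds]     # current operation
faster = [mean_wait(1.25, s) for s in seeds]  # hypothetical scenario: 25% faster service
diffs = [b - f for b, f in zip(base, faster)]
print(f"mean reduction in wait: {statistics.mean(diffs):.2f}")
print(f"standard error:         {statistics.stdev(diffs) / len(diffs) ** 0.5:.2f}")
```

Because both scenarios see exactly the same arrivals, the differences reflect the change in service rather than luck in the random numbers, and rerunning the script reproduces the same figures.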

With these three stages in place, the simulation model can be built. At one time this required programming in general-purpose computer languages. Then came specialised simulation languages such as GPSS and Simula [1]. Today a number of packages allow at least some of the structure of a simulation to be set up graphically, with a generic set of simulation blocks that can be assembled to represent any discrete process or operation. Data Analysis Australia uses Extend for simulation modelling, as it offers the flexibility to program blocks directly if the available suite of simulation blocks isn't suitable for describing events in the model.
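The idea of assembling generic blocks can be sketched in plain Python with generators, where each block consumes the items produced by the block before it. This is not Extend's interface; it is a hypothetical illustration, assuming Poisson arrivals and exponential service times, of how a source block and two single-server blocks (say, a crusher followed by a screen) can be connected to form a process.

```python
import itertools
import random


def source(rate, rng):
    """Source block: items arrive at random (Poisson arrivals assumed)."""
    t = 0.0
    while True:
        t += rng.expovariate(rate)
        yield (t, t)  # (time entered the system, time ready for the next block)


def server(items, mean_service, rng):
    """Single-server, first-come first-served block with exponential service."""
    free_at = 0.0
    for entered, ready in items:
        start = max(ready, free_at)  # wait if the server is still busy
        free_at = start + rng.expovariate(1.0 / mean_service)
        yield (entered, free_at)


def sink(items, n):
    """Sink block: collect n finished items and report mean time in system."""
    done = list(itertools.islice(items, n))
    return sum(out - entered for entered, out in done) / n


rng = random.Random(3)
# Assemble a hypothetical two-stage process from the generic blocks.
line = server(server(source(0.5, rng), 1.0, rng), 1.2, rng)
mean_time = sink(line, 10_000)
print(f"mean time in system: {mean_time:.2f}")
```

For these rates, queueing theory for two exponential stages in series gives a mean time in system of 5 time units, which the sketch should approximate. Adding a stage is a matter of wrapping another block around the chain.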

Simulation modelling is a valuable tool when there is a need to understand a complex process that can't simply be expressed in mathematical terms. In designing a simulation model, the involvement of statistical and mathematical experts is necessary to translate the concepts of the process or phenomenon appropriately into the mathematical framework.

October 2004

Footnotes

[1] Simula was developed by Ole-Johan Dahl and Kristen Nygaard at the Norwegian Computing Centre between 1962 and 1967. It was based on the Algol 60 language and is usually credited with introducing the core concepts of the object-oriented languages in use today, such as classes, objects and inheritance.