Distilling the Essence from Large and Complex Data Sets

Technology has given us the means to collect and store vastly more data than in the past, creating the era of “big data”. Much of this data is also more complex than before: typically less well structured, with more variables recorded and greater data quality issues. Understanding what the data tells us remains a challenge, as the data is harder to visualise and computing power has not kept pace with storage capacity.

One aspect of big data is the growth in the number of variables. In genetics, every point on the genome can be recorded for each person in a study. In retail analysis, shoppers can choose any assortment of the thousands of lines a supermarket might carry. In electricity supply, it is now possible to record a household’s demand every few minutes.

These examples of data growing horizontally (more variables), not just vertically (more individuals), are often not well handled by traditional statistics, and the last twenty years have seen a growth in new methods. It has been an exciting time for statistics, with advances in mathematical theory accompanied by new algorithms and new practical approaches.

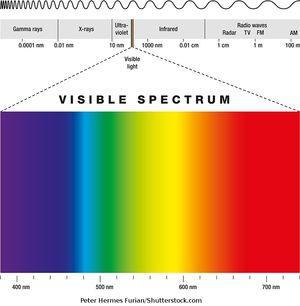

Data Analysis Australia has met this challenge by keeping abreast of these developments and recognising where they can assist our clients. An example is recent work for several clients who collected data using modern spectrographic instruments that record light from the visible through to the near infrared, providing reflectance measures at 2151 individual wavelengths. The applications included mineral processing, plant nutrition and geological surfaces. “Old statistics” would have led to models with thousands of parameters, but we were able to apply modern methods such as penalised regression to obtain meaningful analyses.
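To give a flavour of how penalised regression copes with more variables than observations, the following is a minimal sketch of one common form, the lasso, fitted by coordinate descent. It is illustrative only and is not the analysis carried out for these clients: the data is simulated, the "spectra" are independent random columns (real reflectance spectra are strongly correlated across wavelengths), and the function names, the penalty value and the 200-wavelength size are all assumptions chosen to keep the example small.

```python
import random


def soft_threshold(value, threshold):
    """Shrink value towards zero by threshold, returning exactly 0 inside the band."""
    if value > threshold:
        return value - threshold
    if value < -threshold:
        return value + threshold
    return 0.0


def lasso_coordinate_descent(X, y, penalty, n_sweeps=50):
    """Minimise (1/2n)*||y - Xb||^2 + penalty*||b||_1 by cycling over coefficients."""
    n, p = len(X), len(X[0])
    coef = [0.0] * p
    residual = list(y)  # equals y - Xb while every coefficient is zero
    col_scale = [sum(X[i][j] ** 2 for i in range(n)) / n for j in range(p)]
    for _ in range(n_sweeps):
        for j in range(p):
            if col_scale[j] == 0.0:
                continue  # an all-zero column carries no information
            # Covariance of column j with the residual that ignores column j.
            rho = sum(X[i][j] * (residual[i] + X[i][j] * coef[j]) for i in range(n)) / n
            new_coef = soft_threshold(rho, penalty) / col_scale[j]
            delta = new_coef - coef[j]
            if delta != 0.0:
                for i in range(n):
                    residual[i] -= X[i][j] * delta
                coef[j] = new_coef
    return coef


def simulate_spectra(n_samples=40, n_wavelengths=200, seed=0):
    """Hypothetical data: only three 'wavelengths' truly influence the response."""
    rng = random.Random(seed)
    X = [[rng.gauss(0.0, 1.0) for _ in range(n_wavelengths)] for _ in range(n_samples)]
    true_coef = [0.0] * n_wavelengths
    true_coef[10], true_coef[50], true_coef[120] = 3.0, -2.0, 1.5
    y = [sum(x * b for x, b in zip(row, true_coef)) + rng.gauss(0.0, 0.1) for row in X]
    return X, y, true_coef


X, y, true_coef = simulate_spectra()
coef = lasso_coordinate_descent(X, y, penalty=0.5)
selected = [j for j, b in enumerate(coef) if b != 0.0]
print(f"{len(selected)} of {len(coef)} coefficients are non-zero")
```

The penalty sets many coefficients to exactly zero, so the fitted model depends on a limited set of wavelengths rather than all of them. In practice the penalty would normally be chosen by cross-validation rather than fixed in advance.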

Another example comes from electricity data, where modern smart meters can generate enormous amounts of data. At one time, detailed consumption data was recorded at half-hour intervals. Now it may be recorded every second, potentially providing a whole new class of information. The key is to simplify the data without losing the critical detail: a classical statistical problem, but here with masses of data.
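As a simple illustration of simplifying without losing critical detail, the sketch below reduces a day of per-second readings to half-hour summaries that keep both the average demand and the peak within each block. The readings are simulated, and the field names, the daily load shape and the choice of mean and peak as summaries are assumptions made for the example; a real analysis would choose summaries to suit the question being asked.

```python
import math
import random
from statistics import fmean

SECONDS_PER_BLOCK = 30 * 60  # half-hour blocks


def summarise_blocks(readings, block_size=SECONDS_PER_BLOCK):
    """Collapse consecutive readings into one summary per block."""
    summaries = []
    for start in range(0, len(readings), block_size):
        block = readings[start:start + block_size]
        summaries.append({
            "start_second": start,
            "mean_kw": fmean(block),  # typical demand in the block
            "peak_kw": max(block),    # short spikes that an average alone would hide
        })
    return summaries


def simulate_day(seed=0):
    """Hypothetical per-second household demand in kW over 24 hours."""
    rng = random.Random(seed)
    readings = []
    for second in range(24 * 60 * 60):
        hour = second / 3600
        base = 0.5 + 0.4 * math.sin(2 * math.pi * (hour - 6) / 24)  # daily cycle
        spike = 2.0 if rng.random() < 0.001 else 0.0  # occasional appliance spike
        readings.append(max(0.0, base + spike + rng.gauss(0.0, 0.02)))
    return readings


readings = simulate_day()
summaries = summarise_blocks(readings)
print(len(readings), "readings reduced to", len(summaries), "half-hour summaries")
```

Keeping the peak alongside the mean is one way to retain the short-lived events that per-second recording makes visible and that half-hour averages would smooth away.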

Data Analysis Australia continues to explore new methods. For our internal research we have a dedicated cluster of high-speed computers on which we can investigate new tools and techniques such as Apache Spark and parallel computation. What sets Data Analysis Australia apart, in a world where many claim to be handling big data, is our ability to combine big data with statistical rigour and a practical approach to solving clients’ problems.

For further information on how Data Analysis Australia can help you with complex data problems, please Contact Us.

March 2017